The race to build AI infrastructure is running into an energy grid that was never designed for it. Understanding this tension reveals why the intersection of AI and energy is one of the most consequential spaces to build and invest in right now.

Energy is one of the world's oldest industries. It is also increasingly the defining bottleneck of the newest one. As AI scales from research labs into production infrastructure, it is running headfirst into a physical constraint that no amount of software engineering can abstract away: the grid. The grid was designed for steady, predictable demand, not for the concentrated, high-intensity loads of AI data centres, which are arriving faster than utilities can plan, permit, or connect capacity.

This tension is what makes AI in energy one of the most interesting investment areas right now. Understanding it well requires holding two seemingly contradictory ideas at once: AI is both a massive new source of energy demand and one of the most powerful tools we have for managing energy systems and building them faster. The snake is eating its own tail, at infrastructure scale.

On the demand side, the numbers are striking.

Data centres already consume around 3% of total electricity in the EU, heading toward 4.5% by 2030. In major hubs like Frankfurt, Amsterdam, and Dublin, the local impact is far more pronounced. Data centres in Ireland now consume more than one-fifth of the country's total electricity supply. Both Dublin and Amsterdam have had to pause all new data centre connections. Frankfurt faces lead times of up to 13 years for connections.

In Frankfurt, connecting a data centre to the grid can take up to 13 years. GPT-4 didn't exist three years ago.

In the US, spending on data-centre construction overtook general office-building construction for the first time in 2025. Crucially, every dollar invested in a data centre is far more energy-intensive than a comparable real-estate investment. A single AI-focused hyperscale facility can exceed 100 MW of power demand, enough to supply roughly 100,000 households. The largest facilities now being planned require 20 times that much.
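As a back-of-the-envelope check on the household comparison, the short Python sketch below converts a 100 MW load into power and energy per household. It rests on two simplifying assumptions that are not stated in the text: the facility draws its full 100 MW around the clock, and "supplying" a household means matching its average annual consumption. Real utilisation and household usage vary by site and region.

```python
# Back-of-the-envelope check of "100 MW is enough for 100,000 households".
# Assumption (not from the source): the facility draws its full rated power
# continuously, and a household is characterised by its average consumption.

HOURS_PER_YEAR = 8760  # 365 days x 24 hours, ignoring leap years


def annual_energy_mwh(power_mw: float) -> float:
    """Energy (MWh) consumed by a constant load of `power_mw` over one year."""
    return power_mw * HOURS_PER_YEAR


def per_household(power_mw: float, households: int) -> tuple[float, float]:
    """Return (average kW, annual MWh) available to each household."""
    avg_kw = power_mw * 1000 / households
    annual_mwh = annual_energy_mwh(power_mw) / households
    return avg_kw, annual_mwh


facility_mw = 100.0
households = 100_000
avg_kw, annual_mwh = per_household(facility_mw, households)
largest_planned_gw = facility_mw * 20 / 1000  # "20 times that much"

print(f"Average draw per household: {avg_kw:.1f} kW")
print(f"Annual energy per household: {annual_mwh:.2f} MWh")
print(f"Largest planned facilities: about {largest_planned_gw:.0f} GW")
```

Under these assumptions, each household is allotted an average draw of 1 kW, or about 8.8 MWh per year, and the largest planned sites land at around 2 GW. Changing `facility_mw` or `households` shows how sensitive the comparison is to the assumed household consumption.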

Europe will absorb a significant wave of this capital coming from across the Atlantic. The EU has set a goal of tripling data centre capacity within five to seven years, though most forecasts suggest a doubling by 2030 is more realistic. Getting there requires not just building facilities but connecting them to grids that were not designed for this scale or the speed of demand growth.

This is the chicken-and-egg problem at the heart of AI infrastructure: energy is becoming a bottleneck for AI to scale, while AI is one of the most capable tools we have to fix it.

When applying AI to energy, it helps to be honest about what makes this sector fundamentally different from, say, marketing software.

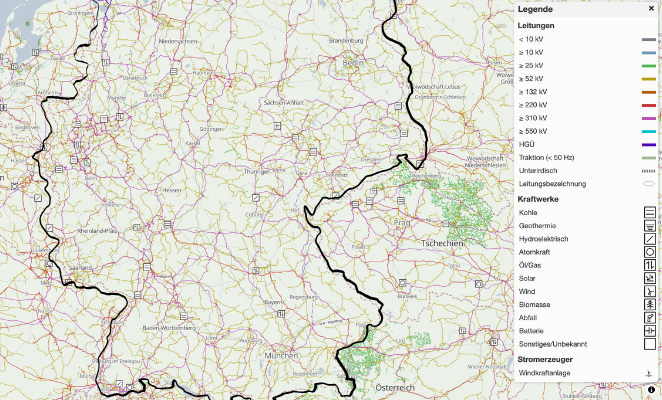

Grid projects take 10 to 20 years from planning to operation. These are safety-critical, heavily regulated, long-lived systems. Germany alone has over 800 decentralised distribution system operators (DSOs), each at a different stage of digitalisation, with no unified data layer across them. Building a digital twin of even a regional grid means stitching together fragmented data from dozens of operators who have limited real-time visibility into their own networks.

This fragmentation is not an accident or a failure of the industry. It is the result of decades of deliberate decentralisation and regional autonomy in grid operation. It also means that AI deployment here cannot follow the playbook from consumer tech. Data access is limited by design. Regulatory approval cycles are long.

The cost of errors is measured not in churn rates but in blackouts.

For investors, this creates both a barrier and a moat. Companies that learn to work within these constraints (building for regulated markets, understanding the dynamics of physical systems, and earning the trust of grid operators) will have advantages that are genuinely hard to replicate.

Despite these constraints, AI is already having a measurable impact across the energy value chain. The applications that work today share a common characteristic: they make energy systems more predictable, more controllable, or faster to build, without requiring full autonomy or real-time system control.

In grid operations, AI-driven load forecasting and redispatch optimisation are reducing the cost and frequency of interventions that grid operators currently manage manually. As renewable penetration increases and load profiles become more volatile, accurate short-term forecasting becomes substantially more valuable.

In asset and project development, AI is compressing timelines for site selection, permitting analysis, and yield estimation. What used to require months of engineering work can now run as automated simulations. This matters because Europe needs to build enormous amounts of new generation and grid infrastructure quickly, and the limiting factor is increasingly the speed of planning and permitting, not capital.

In storage and flexibility, battery optimisation and demand-response coordination are already AI-assisted in leading markets. As battery costs continue to fall and grid-edge assets multiply, the optimisation problem becomes too complex for manual approaches.

Trading systems are perhaps the most mature application. Algorithmic and AI-driven trading has been standard in wholesale electricity markets for years. The next frontier is extending this intelligence down to flexibility aggregation and local market participation.

The pattern across all of these is that AI is augmenting existing workflows rather than replacing them. Humans remain in the loop for consequential decisions. Models generate recommendations; engineers and grid operators evaluate and act on them. This is where the industry is today, and it is a productive place to be.

Over the next three to five years, the most significant shift will be from isolated pilots to production-scale deployment. The gap between “we ran a successful proof of concept” and “this is how we operate” remains large in energy, and closing it requires both technical maturity and real organisational change inside utilities and grid operators.

The technical frontier will move toward multimodal systems that simultaneously integrate physics-based models, weather data, market signals, and real-time grid measurements. Single-modality forecasting tools are already commoditising. The defensible position lies in models that understand the physical constraints of the grid (voltage limits, line ratings, stability margins) and can reason about them alongside price signals and demand forecasts.

Fine-tuning foundation models on specific asset classes is another near-term opportunity. A model trained specifically on wind turbine operational data or on the behaviour of a particular class of distribution transformer will outperform a generic model in ways that actually matter to operators making maintenance and dispatch decisions.

On the hardware side, new baseload technologies will create entirely new categories of AI applications. Small modular reactors, if they reach commercial scale in Europe, will require different operational profiles than existing nuclear plants. Ultra-deep geothermal, a technology being pioneered by companies like telura in our portfolio, can provide firm baseload at locations that would be impractical with conventional drilling. Fusion timelines remain uncertain, but if they compress, they will create entirely new engineering and operational challenges for which AI will be indispensable.

The longer-term vision is genuinely transformative: physics-aware foundation models that can control grids, storage, and generation in real time, dramatically reducing operational costs. The International Energy Agency projects that electricity demand from data centres alone will more than double to around 945 TWh globally by 2030. Managing that demand alongside the integration of variable renewables, electrified transport, and industrial loads is an extraordinary coordination problem. Autonomous energy management at infrastructure scale is not a science fiction scenario. It is the logical endpoint of the trajectory we are already on.

We are most excited by companies that treat the energy sector's constraints as design requirements rather than obstacles. The most compelling founders in this space understand that selling to a distribution system operator is fundamentally different from selling to a software-native company. They build for regulated environments. Their domain depth in power systems, meteorology, or market design creates genuine technical differentiation. And they are patient enough to navigate long sales cycles while being strategic enough to see how a pilot at one network operator can become a land-and-expand motion across a highly fragmented market.

The market structure of European energy is frustrating for companies that want fast sales cycles. Germany alone has over 800 DSOs, each making independent decisions and each with its own data infrastructure (or lack thereof). But this fragmentation is a gift for companies that can navigate it, because the consolidation opportunity is enormous and the first movers will have data and relationships that are very hard to replicate.


Energy is where AI meets both its hardest constraint and its biggest leverage. Historically, that combination is where the most durable companies have been built: NVIDIA at the compute bottleneck, ASML at the limits of lithography, and TSMC at the manufacturing frontier of semiconductors.

Are you building in these or related areas? Reach out to Sebastian on LinkedIn.